A ‘broadband service’ was defined by the standards group CCITT in 1988 as data transmission above the region of 1.5 to 2 Mbit/s. Since then, broadband internet connections have become ubiquitous in our daily lives. They dominate our phone line considerations and, through always-on connected media devices, are encroaching into the rest of the house as well.

Many governments have pledged to improve their nations’ internet access infrastructure in the coming years. The per-capita data transmission rate, measured in bits per second (typically megabits, or millions of bits, per second), is a key indicator of how successful this infrastructure development has been. So what’s driving this need, and what technologies are out there to support it?

Why we need more speed

When the term ‘broadband service’ was coined in 1988, the world was quite a different place. Since then, the number of always-on devices each of us owns has risen dramatically. From the desktop PC to the laptop, and now to fully mobile tablets and smartphones, we’ve seen a surge in the data transmission capacity required per capita. Households, the traditional unit of broadband delivery, often run WiFi networks, and the number of home entertainment devices demanding access to them is increasing as well. Services such as OnLive indicate a trend away from client-side computation and towards remote, server-side computation, which requires ever greater bandwidth to deliver ever higher-resolution content. Visual standards are rising too (higher resolutions, HD, ‘Super-HD’), and they will push the connectivity envelope further.

On the enterprise side, cloud computing is becoming a daily affair. Server-side storage virtualisation is commonplace, along with the high bandwidth it demands, and services such as OnLive Desktop and CloudOn indicate a move towards virtualised application layers as well.

So, the broadband landscape is shifting. Consumer-side, we’re seeing a demand for greater bandwidth. Enterprise-side, we’re seeing the same thing. So what technologies are in the pipeline to start supplying this demand?

How we’ll get our fix

Broadband has traditionally shared its communication space with phone lines. Back in the day, dial-up internet took over the whole line; calls were barred while a connection was open. The development of Digital Subscriber Line (DSL) broadband allowed the two to run simultaneously: data travels at the high-frequency end of the line, leaving the lower frequencies free for telephone conversations.
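To make the frequency split concrete, here is a minimal sketch of how a line’s spectrum might be divided between voice and data. The band edges are typical figures for ADSL over an analogue phone line and are an assumption for illustration; they are not taken from this article, and other DSL variants use different plans. The names `BANDS_KHZ` and `band_for` are made up for the example.

```python
# Approximate band edges in kHz for ADSL over an analogue phone line.
# Assumed for illustration only; other DSL variants use different plans.
BANDS_KHZ = [
    ("voice", 0.0, 4.0),
    ("guard band", 4.0, 25.875),
    ("DSL upstream", 25.875, 138.0),
    ("DSL downstream", 138.0, 1104.0),
]


def band_for(frequency_khz: float) -> str:
    """Return the name of the band that a given frequency falls into."""
    for name, low, high in BANDS_KHZ:
        if low <= frequency_khz < high:
            return name
    return "unused"


if __name__ == "__main__":
    # A voice tone, an upstream carrier and a downstream carrier.
    for f in (1.0, 50.0, 300.0):
        print(f"{f} kHz -> {band_for(f)}")
```

Because the voice band and the data bands never overlap, a simple filter at the socket can separate the two, which is why a call and a broadband session can share one copper pair.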

DSL still looks promising for future developments. Though bandwidth has traditionally been constrained to a maximum throughput of 20 Mbit/s, research in ‘Very-high-bit-rate Digital Subscriber Line’ (VDSL) technology has suggested data rates of up to 52 Mbit/s in the ‘downstream’ (downloading) direction, with a lower rate ‘upstream’ (uploading). VDSL2 pushes this to 100 Mbit/s in both directions.
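To put those figures in perspective, the sketch below estimates how long a file would take to download at each of the rates quoted above. It assumes the full theoretical rate is available and ignores protocol overhead, so real transfers would be slower; the 700 MB file size and the function name `transfer_seconds` are hypothetical choices for the example.

```python
# Downstream rates quoted in the article, in Mbit/s.
# These are theoretical maxima, not real-world speeds.
RATES_MBIT_S = {
    "DSL (traditional ceiling)": 20,
    "VDSL": 52,
    "VDSL2": 100,
}


def transfer_seconds(size_megabytes: float, rate_mbit_s: float) -> float:
    """Ideal time to move size_megabytes at rate_mbit_s, ignoring overhead."""
    size_megabits = size_megabytes * 8  # 1 byte = 8 bits
    return size_megabits / rate_mbit_s


if __name__ == "__main__":
    file_mb = 700  # hypothetical file size in megabytes (10^6 bytes each)
    for name, rate in RATES_MBIT_S.items():
        print(f"{name}: {transfer_seconds(file_mb, rate):.0f} s")
```

Note the factor of eight: line rates are quoted in bits per second, while file sizes are usually quoted in bytes.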

The experimental ‘DSL Rings’ approach applies DSL technology over existing copper phone lines to provide data throughput of up to 400 Mbit/s. However, signal attenuation is high, and data transmission rates drop off rapidly after around 300 metres of cable.

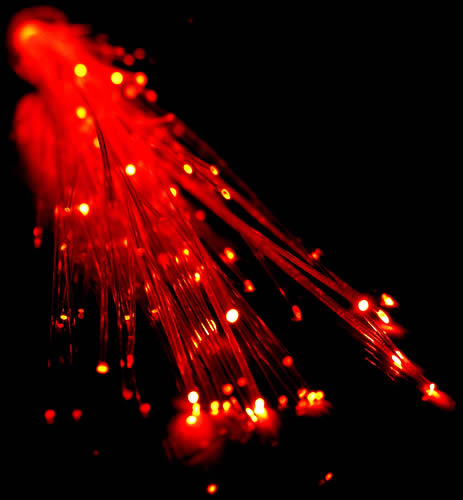

The more likely solution for the near future lies in ‘Fiber To The Home’ (FTTH) technology. Optical cables can carry broadband signals at up to 150 Mbit/s downstream, and over very long distances with little attenuation. Fiber is already used to carry signals across many countries, but the signal tends to be handed over to existing DSL cabling for the final stretch to individual homes. FTTH looks to change that (Australia recently invested in a program to supply 93% of homes using FTTH technology), and services across the world are getting on board, offering higher bit rates via packages such as ‘fiber-optic broadband’, ‘optical broadband’ or the slightly more marketised ‘infinity broadband’.

Of course, efforts are also being made to supply wireless broadband more effectively. WiMAX offers a way to deliver ‘last mile’ (i.e. relay-to-premises) wireless broadband as an alternative to DSL cabling. LTE offers up to 300 Mbit/s download (theoretically), but rollout is slow and fragmented globally: the recent iPad’s 4G connectivity, for example, works only on the frequencies used in the US and numerous other countries, not everywhere. LTE-Advanced suggests a theoretical throughput of 1 Gbit/s, but the technology is a little way off yet.

Efforts are being made in every sphere to meet the growing need for always-on connectivity. Most research and development departments believe that there’s a future to be had in both static, line-based broadband provision and mobile provision, and are willing to invest in both. What do you think? Do you think we’ll need greater investment in traditional, static data transmission, or in the mobile sphere? Drop a line in the comments to start the debate.